A Reddit post claims Anthropic suspended an entire organization without warning:

> everyone in our org woke up to emails saying that their Claude accounts had been suspended (~110 users).

And later:

> none of our admins can actually view usage or billing, because our email addresses were banned.

I do not know the full story here. Maybe there was a real policy reason. Maybe it was automated. Maybe it was a mistake.

But from a business workflow point of view, the exact reason almost matters less than the blast radius.

If one provider can suspend your whole org and your team loses:

- chats
- projects
- artifacts
- admin access
- billing visibility
- daily workflow muscle memory

then the problem is not only the ban.

The problem is that the workflow was too tied to one vendor.

This is why I do not like vendor-specific AI UX as the center of a business workflow. It is fine to use Claude, ChatGPT, Gemini, or anything else. The risky part is training the whole team to live inside one provider’s app.

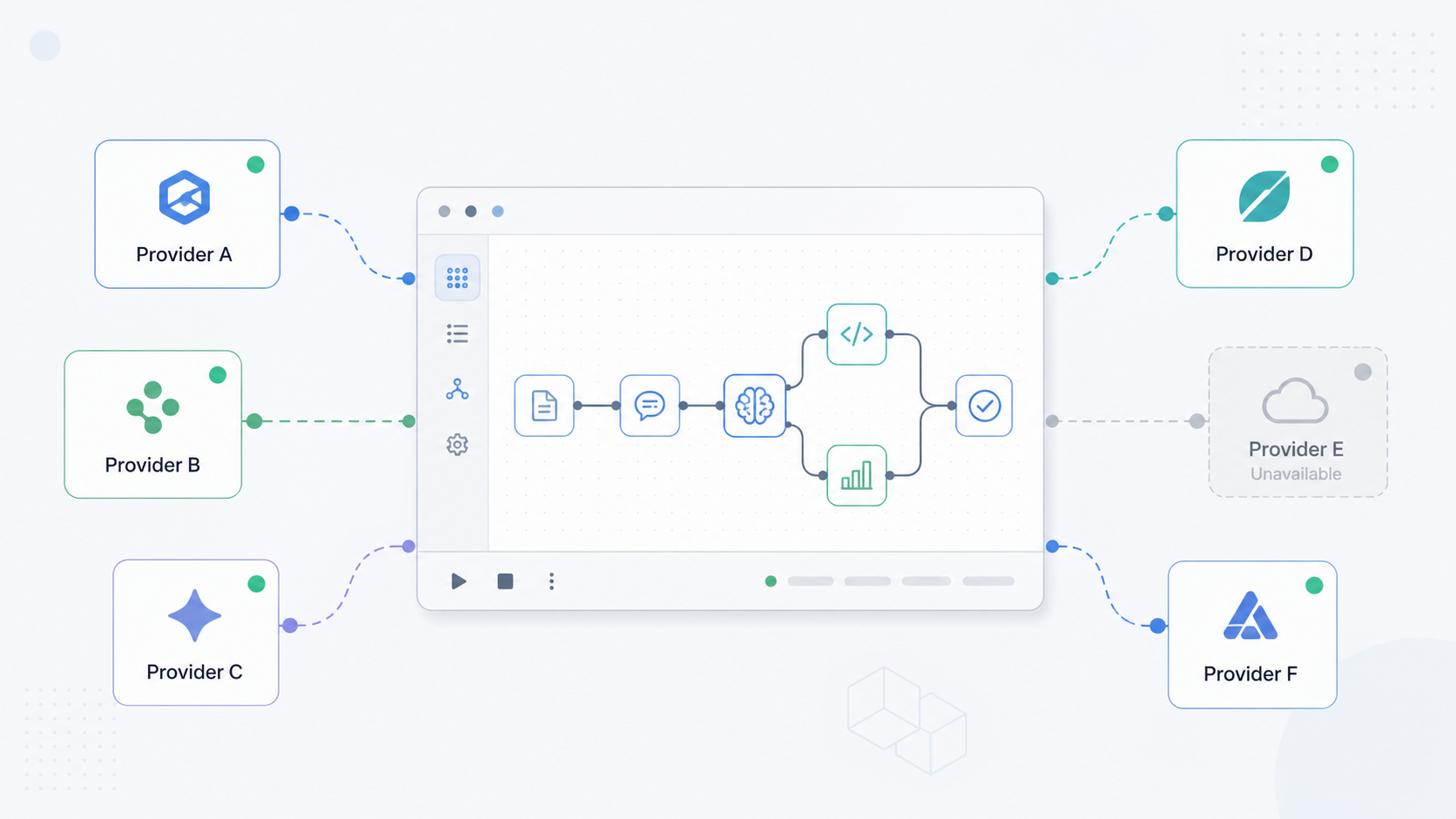

The better pattern is:

- learn one workflow
- keep your data portable
- switch models when needed
- use local models when privacy or continuity matters
- use online models when quality or speed matters

This is part of why I built Msty.

I wanted one simple place for AI work where the workflow belongs to me, not to one model provider. Local models when privacy matters. Hosted models when they are better for the job. Same workspace either way.

That is the part that matters to me: own the data, keep the workflow simple, and make the model replaceable.

Not “never use Anthropic.” Claude is very good.

The point is: do not make any one provider the place where your workflow, memory, data, and team habits all live.

Use the model you want today. Keep an exit path for tomorrow.