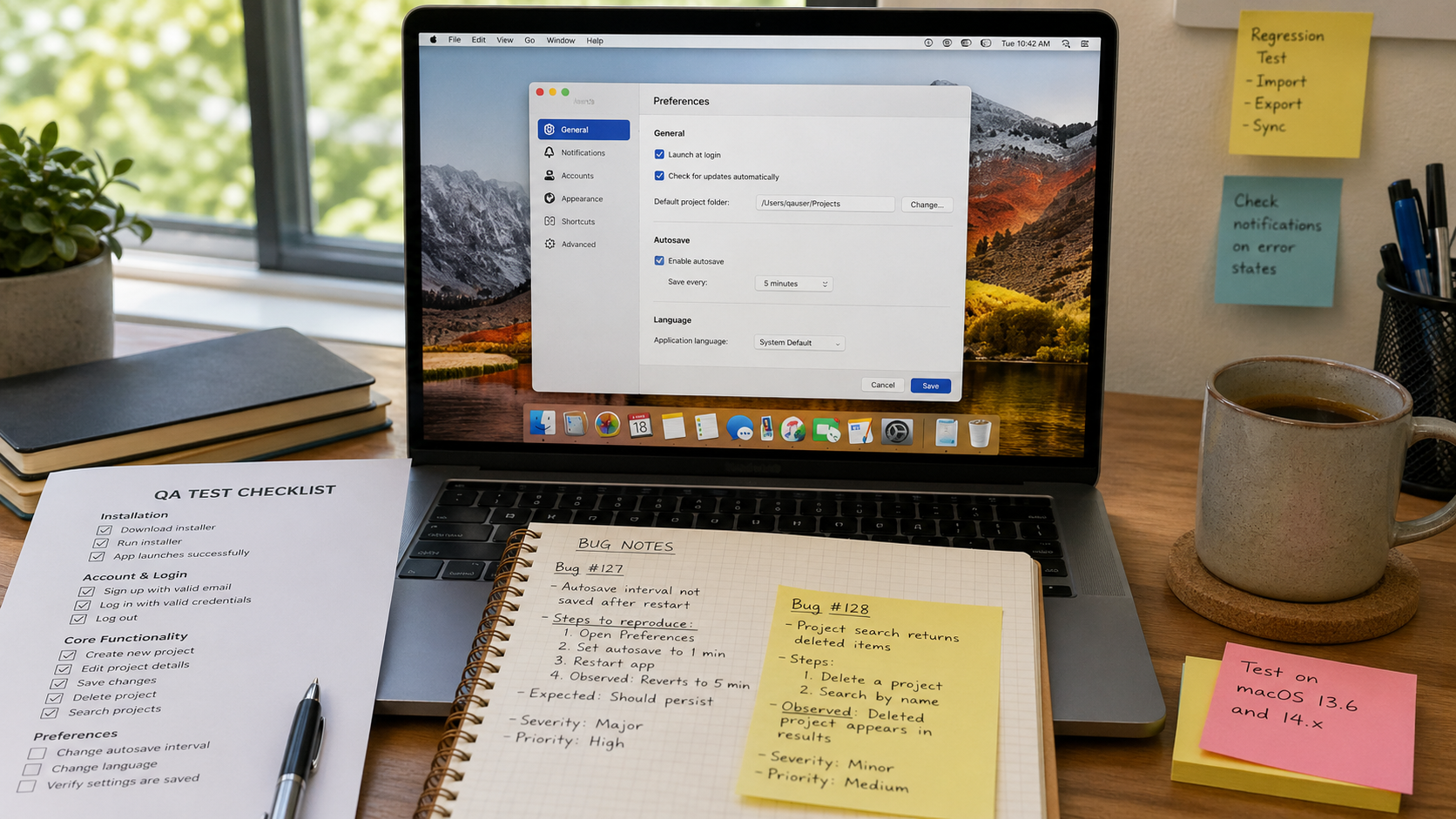

OpenAI’s Codex docs now include a use case titled “QA your app with Computer Use”:

> Click through real product flows and log what breaks.

That sounds obvious, but it has been very useful for testing Msty Claw, our Tauri desktop app.

Writing code with LLM agents has become fast enough that QA is now one of the tighter bottlenecks. More people on the team can ship code now, including people who were not writing code before. That is good, but it also means more things need testing.

Computer Use helps because it can test the actual desktop app, not just the code.

My current workflow:

- Build the feature.

- Do my own quick manual pass.

- Ask the same Codex session to write a QA prompt with gotchas, edge cases, and things to watch.

- Send that prompt to a separate Computer Use session.

- Watch it click through the app and report what breaks.
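To make the hand-off between the two sessions concrete, here is a minimal sketch of what a generated QA prompt could contain. Everything in it is an assumption for illustration: `QABrief`, `build_qa_prompt`, and the permission flags are hypothetical names, not part of Codex or Computer Use. The output is plain text that would be pasted into the separate session.

```python
from dataclasses import dataclass, field


@dataclass
class QABrief:
    """Hypothetical container for what the QA session should know."""
    feature: str
    flows: list[str]
    gotchas: list[str] = field(default_factory=list)
    # What the tester is allowed to do beyond clicking through the UI.
    may_read_code: bool = True
    may_inspect_logs: bool = False
    may_query_database: bool = False


def build_qa_prompt(brief: QABrief) -> str:
    """Render the brief as plain text for a separate testing session."""
    def bullets(items: list[str]) -> str:
        return "\n".join(f"- {item}" for item in items) or "- (none listed)"

    permissions = [
        ("read the source code", brief.may_read_code),
        ("inspect logs", brief.may_inspect_logs),
        ("query the database", brief.may_query_database),
    ]
    allowed = [name for name, ok in permissions if ok]
    denied = [name for name, ok in permissions if not ok]

    sections = [
        f"You are testing: {brief.feature}",
        "Flows to click through:\n" + bullets(brief.flows),
        "Gotchas and edge cases to probe:\n" + bullets(brief.gotchas),
        "You may: " + (", ".join(allowed) or "nothing beyond the UI"),
        "You may not: " + (", ".join(denied) or "(no restrictions listed)"),
        "For each problem, report numbered repro steps, "
        "expected vs. actual behavior, and severity.",
    ]
    return "\n\n".join(sections)


if __name__ == "__main__":
    brief = QABrief(
        feature="multiple-directory support",
        flows=["Add two directories", "Remove one directory"],
        gotchas=["Two folders with the same name in different locations"],
    )
    print(build_qa_prompt(brief))
```

Keeping the permissions explicit in the prompt matters because the tester only looks at logs or the database when told it may.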

The important bit is that this is not just screenshot automation.

Codex can also read the code. It can check whether behavior looks intentional. It can inspect logs or the database if the prompt allows it. It can ask follow-up questions. That makes it much more useful than a blind UI clicker.

The Codex docs say Computer Use is for cases where command-line tools or structured integrations are not enough:

> Let Codex use desktop apps while it works

That is exactly the gap for desktop apps.

Browser testing has had decent automation for a long time. Desktop app testing has always felt heavier. For us, Computer Use makes the loop much easier.

One recent example: I had it test upcoming multiple-directory support in Msty Claw.

Two things stood out:

- It tried one model, noticed tool calls were not working well, and switched to a better one.

- It created two folders named `docs` in different locations and noticed the UI became confusing because the app showed only the folder name while truncating the full path.

That second issue is the kind of thing a lot of people would miss. It is not a crash. It is not a failed happy path. It is a small UX ambiguity that only shows up when the tester is being annoying in the right way.
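One common way to remove this kind of ambiguity is to label each folder with the shortest trailing part of its path that no other folder shares. The sketch below illustrates that general technique only; it is not how Msty Claw handles it, and the function name and example paths are made up.

```python
from pathlib import PurePosixPath


def disambiguate_labels(paths: list[str]) -> dict[str, str]:
    """Map each path to the shortest trailing segment run that is unique."""
    parts = {p: PurePosixPath(p).parts for p in paths}
    labels = {}
    for path, segs in parts.items():
        label = path  # fall back to the full path if no shorter suffix is unique
        # Grow the suffix one segment at a time until no other path shares it.
        for depth in range(1, len(segs)):
            suffix = segs[-depth:]
            clash = any(
                other != path and other_segs[-depth:] == suffix
                for other, other_segs in parts.items()
            )
            if not clash:
                label = "/".join(suffix)
                break
        labels[path] = label
    return labels


if __name__ == "__main__":
    # Hypothetical paths: two folders named "docs" plus one unrelated folder.
    example = [
        "/Users/me/work/docs",
        "/Users/me/personal/docs",
        "/Users/me/src",
    ]
    for path, label in disambiguate_labels(example).items():
        print(f"{label:15} <- {path}")
```

With these example paths, the two `docs` folders get the labels `work/docs` and `personal/docs`, while `src` keeps its short name because nothing else collides with it.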

That is why this feels useful.

Not because it replaces QA. It does not.

It gives me another tester that can:

- Run through real flows

- Try weird inputs

- Notice UI ambiguity

- Read the code when needed

- Produce repro steps

- Keep going while I do something else

For desktop apps, that is a big deal.